Hello everyone!

Today we are going to talk a little bit about the artificial intelligence of the characters and how animations are used to make the characters move and interact seamlessly in the 3D world.

This is the continuation of the previous article, in which we talked about how to make the characters feel alive with animations. I recommend reading it before diving into this one, as it will help you understand the role of the animations in the artificial intelligence behaviours.

Interacting with objects

Animations that require interaction with objects are the most difficult behaviours for the artificial intelligence applied to animations. On the one hand, each object needs its own skeleton; on the other hand, the object’s animation must be synchronized with the character’s animation.

This synchronization happens at two levels:

- Space: The character must begin his animation from a specific point of origin, so that his position fits the shape and position of the object he is going to interact with. We call this point the “interaction point”.

- Time: Both the character and the object must have animations with the same duration. The two animations must also start playing at the same time, so that it really feels like the character’s movement has an impact on the object he is interacting with.

The interaction point is a really important element! We will talk about it later in this post.
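The time side of this synchronization can be sketched in a few lines of Python. This is only an illustration under assumptions, not the game's code: the names `Clip` and `SyncedInteraction`, the tolerance value and the idea of a single shared start time are all hypothetical. The sketch refuses to pair two clips of different lengths and then drives both from the same clock, so they cannot drift apart.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    """Hypothetical animation clip: only the data needed for this sketch."""
    name: str
    duration: float  # seconds


class SyncedInteraction:
    """Plays a character clip and an object clip in lockstep (assumed design)."""

    def __init__(self, character_clip, object_clip, tolerance=1e-3):
        # Time rule 1: both animations must have the same duration.
        if abs(character_clip.duration - object_clip.duration) > tolerance:
            raise ValueError("character and object clips must have the same duration")
        self.character_clip = character_clip
        self.object_clip = object_clip
        self.start_time = None

    def start(self, now):
        # Time rule 2: both animations start at the same moment.
        self.start_time = now

    def sample(self, now):
        """Return the playback time in seconds for each clip (always identical)."""
        if self.start_time is None:
            raise RuntimeError("interaction has not been started")
        elapsed = now - self.start_time
        # Clamp so that sampling after the end holds the last frame.
        t = min(max(elapsed, 0.0), self.character_clip.duration)
        return {self.character_clip.name: t, self.object_clip.name: t}
```

Because both clips read from one clock, there is no separate timer per clip to keep aligned.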

Designing the animations to be flexible

When you are designing a tycoon game, animations are a key part of the leveling process, and more specifically their duration. But the duration is not known at the time of making the animation, so we build the animations with a mechanism that lets us define later how long they last. Then we can tweak it during the leveling phase of development.

The mechanism consists of generating the animations with three phases of key poses:

- Start phase: The first frames of the animation start in an idle pose at the interaction point. The goal is to get the character to the position where the action will really begin.

- Loop phase: The main action happens in the central frames of the animation. In the case of the bench press, the action is to lift the weights. The trick is to prepare both the first and last frames of this segment of the animation, so that it can be played repeatedly as many times as we want without seeing any jump.

- End phase: The last frames of the animation begin in the last pose of the loop and end by leaving the character at the end position of the animation. In the case of the bench press, the soldier gets up and reaches an idle pose.

Example:

We adjusted the leveling so that the character spends 30 seconds doing the bench press exercise and gains 1 experience point in strength each time that the exercise is performed.

When the character’s AI decides that he is going to perform this exercise, the animation will start and reach the loop phase, which will be played for 30 seconds. After that time, the character will gain 1 XP in the strength stat. Then the animation will finish its current loop and play the end phase, with the character getting up from the exercise machine and moving on to another action.
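The three phases and the 30-second example can be sketched as follows, in Python. Everything here is an assumption made for illustration: the class name `PhasedAnimation`, the phase lengths used in the note below, and the choice to round the loop up to whole cycles so that it always ends on its last frame before the end phase starts.

```python
import math
from dataclasses import dataclass


@dataclass
class PhasedAnimation:
    """Hypothetical clip split into start, loop and end phases (seconds)."""
    start_len: float
    loop_len: float  # length of ONE loop cycle
    end_len: float

    def loops_for(self, target_loop_time):
        # Round up to whole cycles so the loop is never cut mid-cycle.
        return max(1, math.ceil(target_loop_time / self.loop_len))

    def phase_at(self, t, target_loop_time):
        """Map elapsed time t to (phase name, time inside that phase)."""
        loop_total = self.loops_for(target_loop_time) * self.loop_len
        if t < self.start_len:
            return ("start", t)
        if t < self.start_len + loop_total:
            # Wrap around inside the loop segment.
            return ("loop", (t - self.start_len) % self.loop_len)
        if t < self.start_len + loop_total + self.end_len:
            return ("end", t - self.start_len - loop_total)
        return ("done", 0.0)

    def strength_xp(self, t, target_loop_time):
        # XP is granted once the loop has been playing for the target time.
        return 1 if t >= self.start_len + target_loop_time else 0
```

With assumed lengths of 2 seconds for the start, 4 seconds per loop cycle and 2 seconds for the end, a 30-second target needs 8 full cycles (32 seconds of loop). The XP is granted 30 seconds into the loop, and the end phase begins once the eighth cycle is complete. Changing the target during leveling only changes the number of cycles, not the animation itself.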

Artificial intelligence and animations

The artificial intelligence of the characters is closely related to their animations. In order to make the characters feel alive, we divide their intelligence into two groups of behaviors:

- Navigation: Everything that has to do with moving from one point to another, either running or walking. This includes being still with an idle animation. This part of artificial intelligence uses various animations and mixes them. It also alters their speeds so that everything fits with the movements made by the character through the 3D space.

- Action playback: When a character is going to interact with an object, the predominant behavior is simply to play the animation. Any displacement of the character that occurs at this time will be determined by how the bones move within the animation, but the logical entity of the AI remains static and waiting until the end of the animation.
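For the navigation side, one common way to mix animations and alter their speeds is to pick blend weights from the agent's speed and then scale the playback rate so the feet match the ground speed. The Python sketch below shows that general technique, not necessarily what the game does: the function name `locomotion_blend` and the authored walk and run speeds are made-up values.

```python
def locomotion_blend(speed, walk_speed=1.5, run_speed=4.0):
    """Return (blend weights, playback rate) for a given agent speed.

    walk_speed and run_speed are the ground speeds (m/s) the walk and run
    clips were authored at. Both defaults are assumptions for illustration.
    """
    if speed <= 0.0:
        # Standing still: idle animation only, at normal rate.
        return {"idle": 1.0, "walk": 0.0, "run": 0.0}, 1.0
    if speed <= walk_speed:
        # Slower than the authored walk: slow the walk clip down.
        return {"idle": 0.0, "walk": 1.0, "run": 0.0}, speed / walk_speed
    if speed < run_speed:
        # Between walk and run: cross-fade the two clips. The blended clip
        # already covers ground at `speed`, so the rate stays at 1.
        w = (speed - walk_speed) / (run_speed - walk_speed)
        return {"idle": 0.0, "walk": 1.0 - w, "run": w}, 1.0
    # Faster than the authored run: speed the run clip up.
    return {"idle": 0.0, "walk": 0.0, "run": 1.0}, speed / run_speed
```

In action playback none of this applies: the clip is simply played at its own rate while the agent waits.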

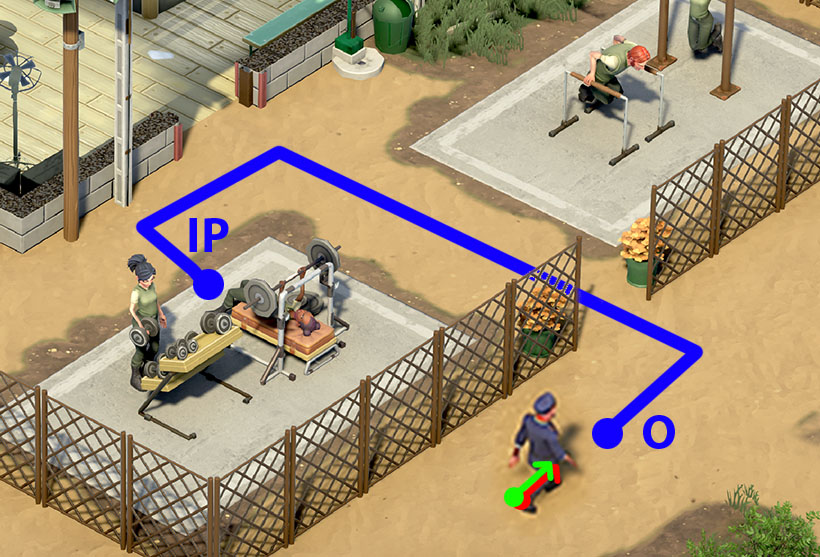

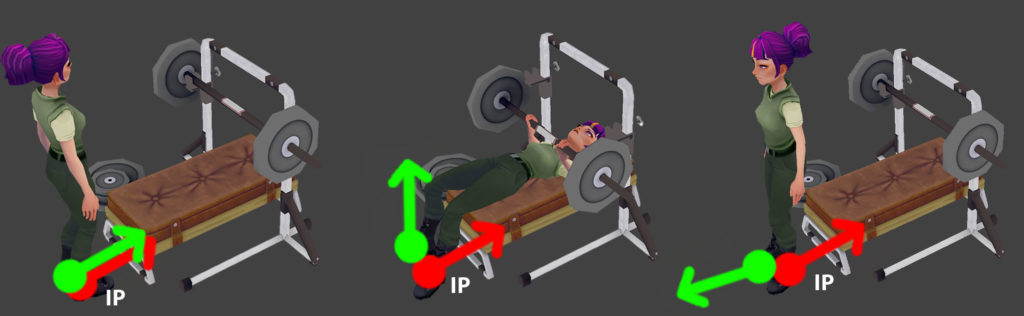

In our case, each character is made up of two closely related entities:

- The artificial intelligence agent represented by the red arrow. It is the logical entity of AI. Basically a directional vector that moves through space from one point to another.

- The visual 3D model represented by the green arrow. It is the character itself, what the player sees. It shares the position and orientation of the agent (red), except when taking actions. At that moment the two entities become detached and they will synchronize their position again later.

We can see how the character on the left of the image is in action playback behavior. When playing the animation of the character running through the obstacle course, the agent (red) and the 3D model (green) have been separated.

On the right side of the image we can see how the character is in navigation behavior. Its two entities remain synchronized.

In the next image we can see the relationship that exists between the navigation behaviour and the action playback behaviour and how they change from one to another:

- The character at the bottom right has decided that he wants to exercise on the bench press (even though the machine is in use at the moment). Then, the AI calculates a route to get to that object. The result is a route from the point of origin (O) to the interaction point (IP) of the object.

- The character will remain in navigation behavior, playing a walking animation and following the pre-calculated route.

- Once the character is at the interaction point (IP), with the proper position and orientation to start the animation, he will go into action playback behavior. Then, he will start to perform the bench press animation.
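The switch from navigation to action playback described in these steps could look roughly like the Python sketch below. It rests on assumptions: the `Agent` class, the tolerance values, the state names and the final snap onto the interaction point are all hypothetical, and the route calculation itself is left out.

```python
import math
from dataclasses import dataclass


@dataclass
class Agent:
    """Hypothetical AI agent: a 2D position on the ground plus a heading."""
    x: float
    z: float
    heading_deg: float
    state: str = "navigation"


def angle_diff_deg(a, b):
    """Smallest signed difference between two headings, in [-180, 180)."""
    return (a - b + 180.0) % 360.0 - 180.0


def update_behavior(agent, ip_x, ip_z, ip_heading_deg, pos_tol=0.05, ang_tol=2.0):
    """Switch to action playback once the agent stands on the interaction
    point (IP) with the proper orientation. Tolerances are assumed values."""
    if agent.state != "navigation":
        return agent.state
    at_point = math.hypot(agent.x - ip_x, agent.z - ip_z) <= pos_tol
    facing = abs(angle_diff_deg(agent.heading_deg, ip_heading_deg)) <= ang_tol
    if at_point and facing:
        # Assumption: snap exactly onto the IP so the animation starts
        # from the origin it was authored for.
        agent.x, agent.z, agent.heading_deg = ip_x, ip_z, ip_heading_deg
        agent.state = "action_playback"
    return agent.state
```

Checking the orientation as well as the position matters here, because an animation that starts from the right spot but the wrong facing would not fit the object.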

When the bench press animation begins, this is what happens:

- The character starts from the point of interaction with his two synchronized entities.

- He performs the animation until it ends.

- The character ends the animation and his visual 3D model is in a different position and direction than the artificial intelligence agent.

- The artificial intelligence agent overwrites its position and orientation to adopt those of the visual 3D model. This way, they remain synchronized and the character is ready to continue walking in the direction he’s facing, without any jump in his animations or positions.

In some animations, the offset between the two entities affects both position and rotation, so both have to be compensated. In that case the synchronization must be done on six axes (three for position and three for rotation) to match the two entities.
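The detach and resynchronize cycle can be sketched in Python like this. It is a simplified illustration under assumptions, not the game's implementation: `Pose`, `Character` and their methods are hypothetical names, and rotation is stored as three plain angles to keep the six axes visible.

```python
from dataclasses import dataclass, replace


@dataclass
class Pose:
    """Six values: three for position, three for rotation (degrees)."""
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0
    pitch: float = 0.0
    yaw: float = 0.0
    roll: float = 0.0


class Character:
    """Hypothetical character holding the AI agent and the visual model."""

    def __init__(self):
        self.agent = Pose()   # logical entity (red arrow)
        self.model = Pose()   # what the player sees (green arrow)
        self.in_action = False

    def begin_action(self):
        # From here on the agent stays static and only the model moves.
        self.in_action = True

    def apply_animation_motion(self, **deltas):
        """Motion coming from the animation moves only the visual model."""
        if not self.in_action:
            raise RuntimeError("model only detaches during action playback")
        for axis, delta in deltas.items():
            setattr(self.model, axis, getattr(self.model, axis) + delta)

    def end_action(self):
        # The agent adopts the model's pose on all six axes at once,
        # so there is no visible jump when navigation resumes.
        self.agent = replace(self.model)
        self.in_action = False
```

For example, after `begin_action()` and `apply_animation_motion(x=2.0, yaw=90.0)` the two poses differ, and `end_action()` makes them equal again.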

How everything comes alive inside the game

One of the things I’m always thinking about is the amount of work that goes into everything related to the characters, from their generation to the methods used to move them around the 3D map and make them interact with objects in a realistic way. And then the player sees all that from quite a distant perspective.

But, in the end, all that work counts: those facial animations, the characters moving their small fingers, the micro gestures of some parts of the body… All those small details are what really breathe life into the game, once the player sees the final result with everything happening at the same time.

I hope you enjoyed this look at the animations in One Military Camp :). Don’t forget to wishlist and follow the game on Steam! It will give us a nice, warm and fuzzy feeling, and you won’t miss the next entries of this Development Diary! (and we have lots of cool things to show, trust us 😉 )

https://store.steampowered.com/app/1743830/One_Military_Camp/

See you soon, soldiers!

—————

Written by Miguel García (Creative Director of Abylight Barcelona)